AI & Systems

Context-Aware AI Walking Meditation (Smart Glasses)

Designed an AI-driven walking meditation experience that adapts its guidance in real time to the environmental context detected through smart glasses.

- Contextual prompt architecture: structured prompt engineering translating environmental signals into psychologically coherent guidance

- Latency-aware experience design: interaction pacing and response framing designed to accommodate real-world model delays

- Hallucination mitigation through design: constrained prompting, environmental grounding and structured output formats

- Evaluation programme: large-scale UX testing and comparative benchmarking of latency and contextual accuracy against baseline AI models

Context-aware AI pipeline translating environmental signals into real-time guided meditation.
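The constrained prompting, environmental grounding and structured output formats listed above can be illustrated with a minimal Python sketch. It assumes the glasses expose a list of detected object labels. `SceneContext`, `build_prompt`, `validate`, `safe_guidance`, `MAX_WORDS` and the JSON shape are hypothetical names chosen for illustration, not the project's actual implementation, and the model call itself is omitted.

```python
from __future__ import annotations

import json
from dataclasses import dataclass

MAX_WORDS = 40  # hypothetical cap so spoken guidance stays short


@dataclass
class SceneContext:
    """Hypothetical environmental signals from the glasses' perception layer."""
    setting: str                 # e.g. "park"
    detected_objects: list[str]  # labels from an assumed object detector


# Pre-written line used whenever the model's output fails validation.
FALLBACK = {"focus": None, "guidance": "Notice the rhythm of your steps and your breath."}


def build_prompt(ctx: SceneContext) -> str:
    """Constrain the model to the detected scene and a fixed JSON output shape."""
    objects = ", ".join(ctx.detected_objects) or "none"
    return (
        "You are a calm walking meditation guide.\n"
        f"Setting: {ctx.setting}\n"
        f"Visible objects (refer ONLY to these): {objects}\n"
        'Reply with JSON only: {"focus": <one visible object or null>, '
        f'"guidance": <at most {MAX_WORDS} words>}}'
    )


def validate(raw: str, ctx: SceneContext) -> dict | None:
    """Return parsed guidance only if it is well-formed and grounded in the scene."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or set(data) != {"focus", "guidance"}:
        return None
    focus, guidance = data["focus"], data["guidance"]
    if focus is not None and focus not in ctx.detected_objects:
        return None  # the model referred to something the glasses did not see
    if not isinstance(guidance, str) or not guidance.strip():
        return None
    if len(guidance.split()) > MAX_WORDS:
        return None
    return data


def safe_guidance(raw: str, ctx: SceneContext) -> dict:
    """Fall back to scripted guidance rather than speak an invalid response."""
    return validate(raw, ctx) or FALLBACK
```

Falling back to a scripted line on any validation failure trades variety for safety, which suits an audio experience where a wrong reference to the surroundings is likely more disruptive than a generic cue.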

AI-enabled Knowledge Base

Developed a design tool integrating academic literature, internal experimental findings and structured LLM reasoning into a unified knowledge layer for product teams.

- Multi-source synthesis: research evidence, internal data and model reasoning combined into structured outputs

- Accuracy controls: constrained prompting and evidence grounding to reduce hallucination risk

- Design translation layer: converts psychological principles into actionable product recommendations

- Team-aware guidance: suggests feasible designs aligned to available developer and artist skill sets

Research evidence, internal data and LLM reasoning integrated into structured design intelligence.
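The evidence grounding and team-aware filtering described above can be sketched as follows, assuming each LLM recommendation arrives tagged with the evidence IDs it cites and the skills needed to build it. The word-overlap retriever is a deliberately simple stand-in for whatever retrieval the tool actually uses, and all class and function names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    id: str
    source: str  # assumed labels: "literature" or "internal"
    text: str


@dataclass(frozen=True)
class Recommendation:
    text: str
    cited_ids: tuple[str, ...]       # evidence IDs the LLM was required to cite
    required_skills: frozenset[str]  # skills needed to build it (assumed tagging)


def retrieve(query: str, corpus: list[Evidence], k: int = 3) -> list[Evidence]:
    """Rank evidence by word overlap with the query (a stand-in for a real retriever)."""
    q = set(query.lower().split())
    scored = [(len(q & set(e.text.lower().split())), e) for e in corpus]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [e for _, e in scored[:k]]


def is_grounded(rec: Recommendation, evidence: list[Evidence]) -> bool:
    """A recommendation counts as grounded only if every citation was actually retrieved."""
    known = {e.id for e in evidence}
    return bool(rec.cited_ids) and all(c in known for c in rec.cited_ids)


def feasible(recs: list[Recommendation], evidence: list[Evidence],
             team_skills: frozenset[str]) -> list[Recommendation]:
    """Keep grounded recommendations that the current team has the skills to build."""
    return [r for r in recs
            if is_grounded(r, evidence) and r.required_skills <= team_skills]
```

Rejecting uncited recommendations outright, rather than merely flagging them, is one conservative way to reduce hallucination risk before suggestions reach product teams.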

Categorisation Engine

Built a system to automatically analyse and tag VR experiences at scale, turning design features into structured data for analysis.

- Design dimensions tracked: colour, visual complexity, cognitive load, music, pacing, scene changes, interactivity and more

- Automated analysis: extracts visual and audio features across large content libraries

- Consistent framework: standardised categories allowing direct comparison between experiences

- Scalable system: updates automatically as new experiences are added

- Connected to user data: links design features to engagement and performance metrics

Automated classification of immersive design features into structured data.

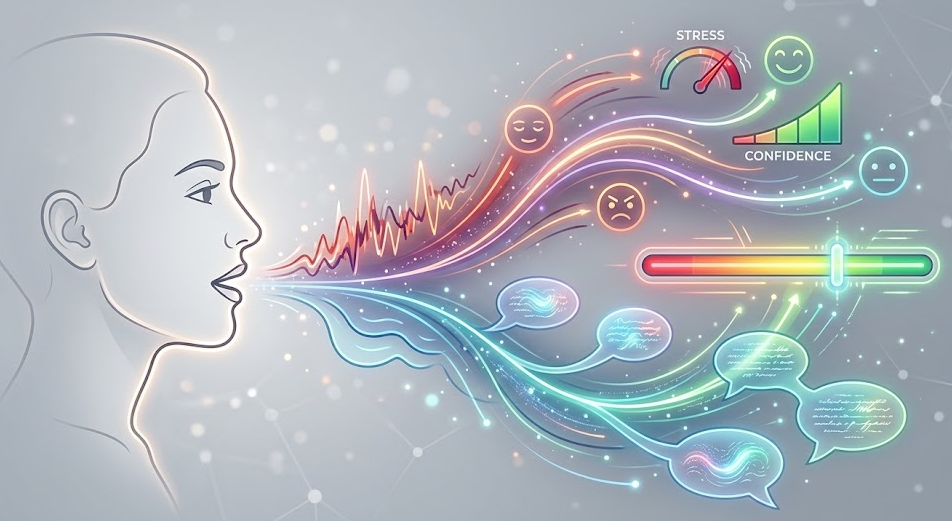
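A minimal sketch of how raw measurements might become standardised, comparable tags and then be joined to user data. It assumes per-frame mean luminance (on a 0 to 1 scale) and a music tempo have already been extracted upstream; the scene-cut heuristic, thresholds and bucket edges are hypothetical and cover only two of the tracked dimensions.

```python
from __future__ import annotations

from statistics import mean

SCENE_CUT_THRESHOLD = 0.3  # hypothetical: luminance jump (0 to 1 scale) treated as a cut


def count_scene_changes(frame_luma: list[float], threshold: float = SCENE_CUT_THRESHOLD) -> int:
    """Count abrupt jumps in mean frame luminance as a crude proxy for scene changes."""
    return sum(1 for a, b in zip(frame_luma, frame_luma[1:]) if abs(b - a) > threshold)


def bucket(value: float, edges: tuple[float, float]) -> str:
    """Map a raw measurement onto a shared low/medium/high scale."""
    low, high = edges
    if value < low:
        return "low"
    return "high" if value >= high else "medium"


def tag_experience(frame_luma: list[float], duration_min: float, music_bpm: float) -> dict[str, str]:
    """Produce standardised tags for one experience (bucket edges are hypothetical)."""
    cuts_per_min = count_scene_changes(frame_luma) / duration_min
    return {
        "pacing": bucket(cuts_per_min, (1.0, 4.0)),
        "music_tempo": bucket(music_bpm, (80.0, 120.0)),
    }


def engagement_by_tag(tags: dict[str, dict[str, str]], engagement: dict[str, float],
                      dimension: str) -> dict[str, float]:
    """Average a user metric per category of one design dimension."""
    groups: dict[str, list[float]] = {}
    for exp_id, exp_tags in tags.items():
        if exp_id in engagement:
            groups.setdefault(exp_tags[dimension], []).append(engagement[exp_id])
    return {category: mean(values) for category, values in groups.items()}
```

Bucketing every dimension onto the same low/medium/high scale is one way to get the direct comparability the framework aims for; a newly added experience can be tagged by rerunning `tag_experience` on its extracted features.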

Real-Time Voice Analysis for Soft Skills Training

Contributed to the development of a real-time voice analysis system embedded in soft-skills training applications, giving users live feedback on their communication performance.

- Emotional voice analysis: detection of vocal cues linked to the speaker's emotional state

- Sentiment analysis: speech-to-text conversion followed by natural language sentiment evaluation

- Live performance feedback: real-time insights delivered during presentations and difficult-conversation simulations

- Behavioural integration: feedback designed to encourage immediate performance adjustment and skill refinement

Real-time emotional and sentiment analysis integrated into behavioural training environments.
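The two analysis paths above, vocal cues and transcript sentiment, feeding live feedback can be sketched as below. This is an illustrative stand-in, not the system's actual models: it assumes speech-to-text has already produced timestamped transcript chunks with a loudness measure, uses speaking rate and loudness as crude vocal cues rather than true emotion detection, and replaces the sentiment model with a tiny hypothetical lexicon. All names and thresholds are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical mini-lexicon standing in for a trained sentiment model.
POSITIVE = {"great", "thanks", "appreciate", "agree", "good"}
NEGATIVE = {"no", "wrong", "problem", "never", "bad"}

FAST_WPM = 170.0    # hypothetical "speaking too fast" threshold
QUIET_ENERGY = 0.1  # hypothetical loudness floor on an assumed 0 to 1 scale


@dataclass
class Chunk:
    """A short window of speech; text comes from an assumed upstream speech-to-text step."""
    text: str
    start_s: float
    end_s: float
    rms_energy: float  # loudness proxy, 0 to 1


def sentiment(text: str) -> float:
    """Score in [-1, 1]: (positive hits - negative hits) / total hits."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return (pos - neg) / max(1, pos + neg)


def words_per_minute(chunk: Chunk) -> float:
    """Speaking rate for one chunk; zero-length chunks report 0."""
    duration = chunk.end_s - chunk.start_s
    if duration <= 0:
        return 0.0
    return len(chunk.text.split()) * 60 / duration


def live_feedback(chunk: Chunk) -> list[str]:
    """Turn one chunk's cues into short, actionable prompts for the user."""
    tips = []
    if words_per_minute(chunk) > FAST_WPM:
        tips.append("Slow down a little.")
    if chunk.rms_energy < QUIET_ENERGY:
        tips.append("Project your voice.")
    if sentiment(chunk.text) < -0.5:
        tips.append("Try reframing more constructively.")
    return tips
```

Keeping each check independent and returning short imperative tips fits the aim of prompting an immediate adjustment mid-conversation rather than a post-session report.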